Vitis Video Analytics SDK Migration for DeepStream Users

Overview

If you know how to use NVIDIA DeepStream, you already know how to use Vitis Video Analytics SDK (VVAS)! This article shows the similarities between the two flows while highlighting the advantages to be gained by moving to a Xilinx solution.

Video Analytics and GStreamer

Video is everywhere. Public facilities, factories, and medical equipment all produce reams of video data. In the past, using this data involved either a human or a simple computer vision algorithm. Artificial intelligence and machine learning now enable computers to do a deeper, more intelligent analysis of video data. That process is called video analytics.

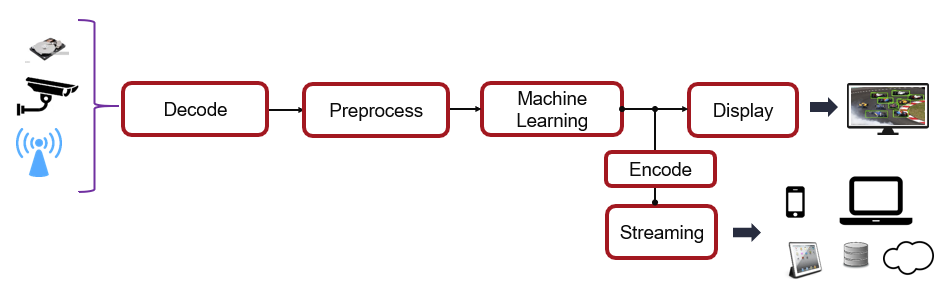

There are a number of ways to architect a video analytics solution, but the concept of a video pipeline is frequently employed. Figure 1 illustrates such a pipeline.

Figure 1: Conceptual view of a video analytics pipeline

GStreamer is an open-source framework that makes pipelines like this easy to construct. It was established to enable developers to link processing elements into complex multimedia pipelines. Pipeline stages are called plugins. GStreamer has over a thousand plugins that can be used with ease on most Windows and Linux systems, covering just about any type of multimedia action you might want to take: audio and video processing, file manipulation, filtering, and plenty more.
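As a minimal sketch of what linking plugins looks like in practice, the Python below joins element descriptions with `!`, the link operator in `gst-launch-1.0` pipeline syntax. The helper name `build_pipeline` is hypothetical and not part of GStreamer; `videotestsrc`, `videoconvert`, and `fakesink` are standard open-source elements chosen only for illustration.

```python
def build_pipeline(elements):
    """Join element descriptions into a gst-launch-1.0 pipeline string.

    Each entry is an element name optionally followed by properties,
    e.g. "videotestsrc num-buffers=30". This is a hypothetical helper
    for illustration, not a GStreamer API.
    """
    if not elements:
        raise ValueError("a pipeline needs at least one element")
    # "!" links the output of one element to the input of the next.
    return " ! ".join(elements)


description = build_pipeline([
    "videotestsrc num-buffers=30",  # generates test frames
    "videoconvert",                 # converts between raw video formats
    "fakesink",                     # discards whatever it receives
])
print("gst-launch-1.0 " + description)
```

The printed line can be pasted into a shell on any system with GStreamer installed.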

Systems with specialized hardware, such as the “Machine Learning” block in Figure 1, present challenges in getting support from the open-source community. Hardware vendors have unique and proprietary ways of doing machine learning, and it’s not reasonable to expect the open-source community to help bring software support for these solutions to market. Vendors have instead built products on top of GStreamer to integrate these capabilities. NVIDIA has the DeepStream framework; Xilinx has the Vitis Video Analytics SDK.

In this article we’ll talk about implementing a simple Vitis Video Analytics SDK pipeline, demonstrating that, if you know how to use DeepStream, you know how to use Vitis Video Analytics SDK. We’ll do this by highlighting the features of custom VVAS plugins that enable their use with a standard GStreamer pipeline.

Inference Tool Comparison

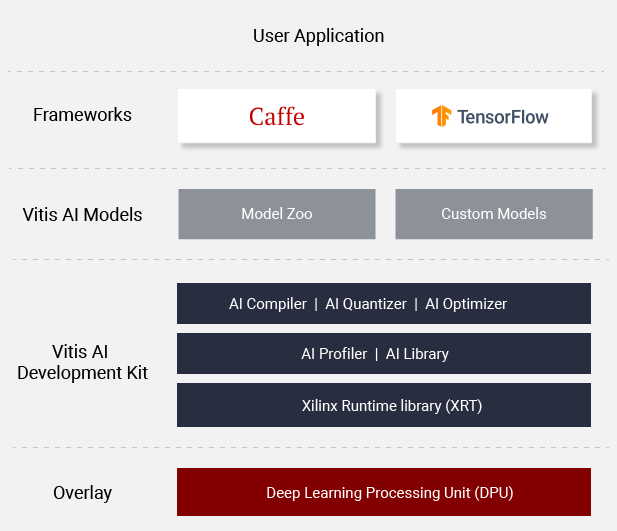

One of the features that make VVAS (and DeepStream) different from open-source GStreamer is the support of machine learning plugins. In both VVAS and DeepStream, these plugins make use of the vendor’s machine learning toolkit under the hood. DeepStream uses NVIDIA’s TensorRT. VVAS uses Vitis-AI. Both tools do fundamentally the same thing: translate machine learning networks into a form that will run on proprietary hardware. Figure 2 shows the Vitis-AI components that provide this functionality.

Figure 2: Vitis-AI Components

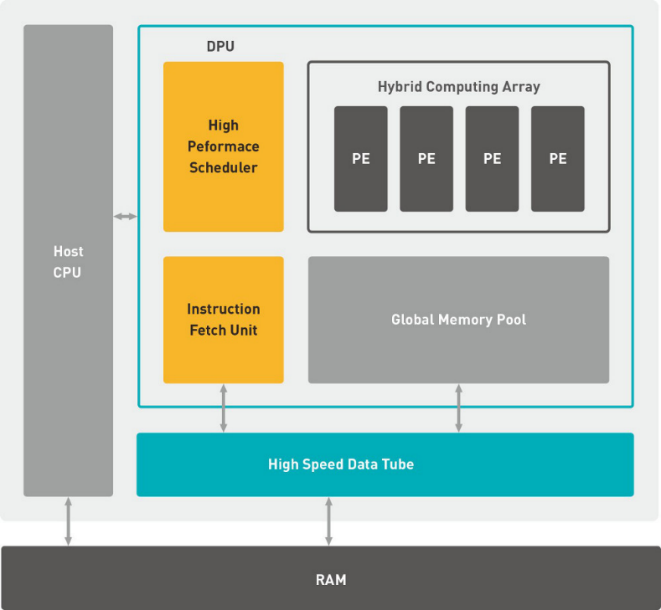

FPGAs and GPUs are inherently different, however. GPUs use an array of small processors to accelerate code execution by loading up memory and then processing many threads in parallel. Xilinx devices accelerate software and algorithms by building special-function hardware accelerators out of logic gates. In the case of machine learning, these logic gates implement the Xilinx Deep Learning Processing Unit, or DPU. The DPU is a processor optimized to execute CNNs. (Consult the Xilinx Vitis-AI Model Zoo for a complete list of supported networks.) Vitis-AI creates the software that executes on the DPU.

Figure 3: High level block diagram of Xilinx Deep Learning Processing Unit (DPU)

While both TensorRT and Vitis-AI perform the same functions (compile, optimize, quantize, deploy), networks deployed on Xilinx hardware have a number of potential advantages. If the network isn’t memory-bound, there may be advantages in both performance and latency. And while the DPU consumes some logic in the FPGA, it may not consume all of it. This leaves the rest of the FPGA’s logic available for other user functions and accelerators, allowing users to further differentiate their products. At the system level, for a given device family Xilinx almost always offers a greater variety of options in the areas of density, I/O, power, and performance.

Basic VVAS Machine Learning Pipeline

Now that we understand that a Vitis Video Analytics SDK machine learning plugin uses Vitis-AI under the hood, it is instructive to look at a complete Vitis Video Analytics SDK pipeline that uses this plugin.

Here is a simple Vitis Video Analytics SDK “hello world” pipeline example.

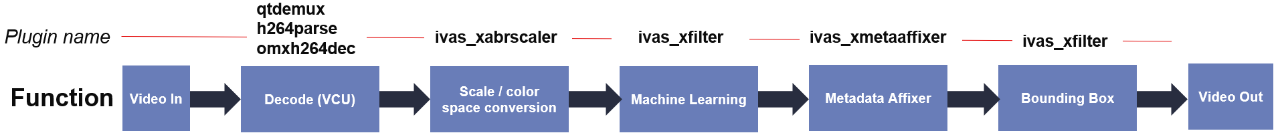

Figure 4: Basic VVAS pipeline

This pipeline has some open-source, standard GStreamer plugins, notably video in and video out, along with the plugins to control the VCU on the Xilinx device (qtdemux, h264parse and omxh264dec).

It also has the following custom Vitis Video Analytics SDK plugins, which support functions beyond machine learning. Some of these plugins run entirely in software on the host processor, while the plugins marked “yes” in the “Accelerated?” column run in the FPGA logic.

| Function | Plug-in | Accelerated? | DS Equivalent |
| --- | --- | --- | --- |
| Scale / color space convert | ivas_xabrscaler | yes | nvvideoconvert |
| Machine Learning | ivas_xfilter | yes | nvdsinfer |
| Metadata Affixer (1) | ivas_xmetaaffixer | SW only | na |
| Bounding Box (2) | ivas_xfilter | SW only | nvdsosd |

Table 1: Vitis Video Analytics SDK plugins used in example pipeline

Data flows through the pipeline from left to right. The video stream is first decoded, then scaled and color space converted. A machine learning algorithm runs on the scaled, converted data, and the resulting metadata is applied to the original data stream. Finally, bounding boxes are superimposed on the video, which is displayed on a monitor.

Figure 5: Image with Face Detection

Video CODEC Unit Decodes Incoming Video

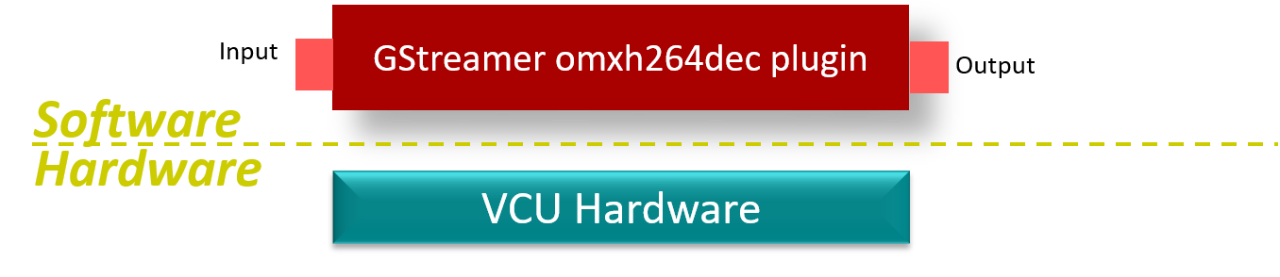

Some Xilinx FPGAs have a built-in VCU (Video Codec Unit), which accelerates H.264 and H.265 video encoding and decoding. The GStreamer qtdemux plugin separates the video stream from the audio, while the h264parse and omxh264dec plugins collectively use the VCU to decode the input H.264 video stream into a format that the rest of the pipeline can use.

Figure 6: omxh264dec plugin

Preprocessor Plugin: ivas_xabrscaler

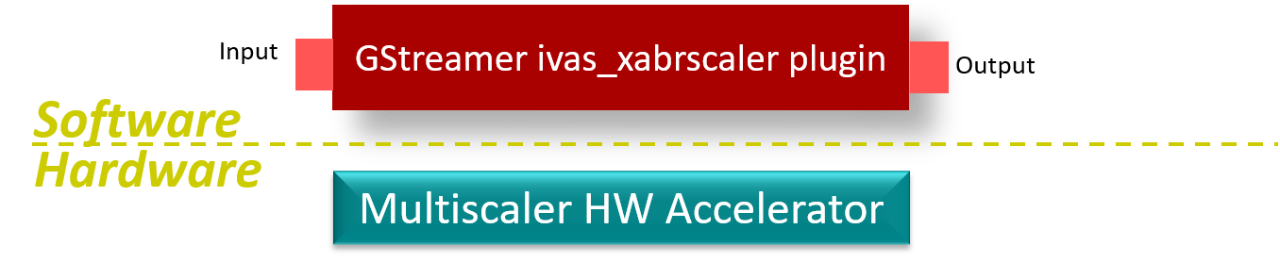

Different machine learning models place different requirements on the incoming video. The video might need to be resized, normalized, or converted to a different color space. Although all these operations can be done in software, the ivas_xabrscaler plugin sits on top of a programmable logic-based block of hardware called the multiscaler, which accelerates these functions by implementing them in FPGA gates.
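To make the preprocessing steps concrete, here is a small software-only sketch of scaling, color space conversion, and normalization on a toy frame. It is not the multiscaler's algorithm: nearest-neighbor scaling, the RGB-to-BGR reorder, the per-channel mean of 128, and the function name `preprocess` are all assumptions chosen for illustration.

```python
def preprocess(frame_rgb, out_w, out_h, mean_bgr=(128, 128, 128)):
    """Scale, convert RGB to BGR, and subtract a per-channel mean.

    frame_rgb is a list of rows, each row a list of (r, g, b) tuples.
    Returns out_h rows of out_w (b, g, r) tuples. Nearest-neighbor
    scaling is used for brevity and is not claimed to match the
    multiscaler hardware.
    """
    in_h, in_w = len(frame_rgb), len(frame_rgb[0])
    out = []
    for y in range(out_h):
        src_y = y * in_h // out_h      # nearest source row
        row = []
        for x in range(out_w):
            src_x = x * in_w // out_w  # nearest source column
            r, g, b = frame_rgb[src_y][src_x]
            # Reorder to BGR, then subtract the per-channel mean.
            row.append((b - mean_bgr[0], g - mean_bgr[1], r - mean_bgr[2]))
        out.append(row)
    return out


# A 4x4 frame of solid red, scaled down to 2x2.
red = [[(255, 0, 0)] * 4 for _ in range(4)]
small = preprocess(red, out_w=2, out_h=2)
print(small[0][0])
```

Doing this per pixel on the host processor is exactly the kind of work that benefits from being moved into FPGA logic.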

Figure 7: ivas_xabrscaler plugin

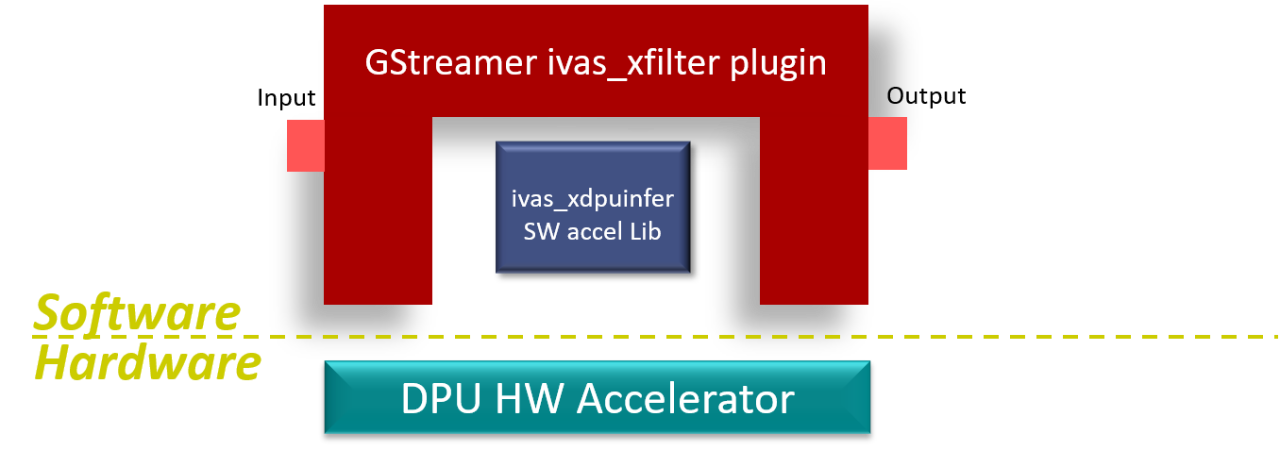

Machine Learning Plugin: ivas_xfilter

The machine learning block in Vitis Video Analytics SDK has at its heart the DPU, discussed earlier. It is controlled with the ivas_xfilter plugin, which drives the DPU hardware through a software library called ivas_xdpuinfer.

Figure 8: ivas_xfilter plugin has HW and SW components

Some Vitis Video Analytics SDK plugins, including ivas_xfilter, require additional configuration information, which is provided in a JSON file. The plugin’s entry in the pipeline includes the path to the JSON file, as we can see in the example pipeline:

! ivas_xfilter kernels-config="kernel_densebox_320_320.json"

The JSON file “kernel_densebox_320_320.json” looks like this:

    {
      "xclbin-location": "XCLBIN_PATH",
      "ivas-library-repo": "/usr/lib/",
      "element-mode": "inplace",
      "kernels": [
        {
          "library-name": "libivas_xdpuinfer.so",
          "config": {
            "model-name": "densebox_320_320",
            "model-class": "FACEDETECT",
            "model-format": "BGR",
            "model-path": "…/vitis_ai_library/models/",
            "run_time_model": false,
            "need_preprocess": false,
            "performance_test": false,
            "debug_level": 1
          }
        }
      ]
    }

For more information on the structure and contents of the JSON files, refer to the Vitis Video Analytics SDK documentation. For more information on densebox_320_320, the model doing face detection, see the Xilinx Vitis-AI Model Zoo.
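Hand-edited JSON is easy to break, for example with a stray typographic quote, so one option is to generate the file from a script. The sketch below builds a configuration with the same keys as the example and round-trips it through Python's `json` module. The function name `make_dpu_config` and the `MODEL_PATH` placeholder are assumptions; substitute the values for your own installation.

```python
import json


def make_dpu_config(model_name, model_class, model_path, xclbin_path):
    """Build a kernels-config dictionary with the same keys as the example."""
    return {
        "xclbin-location": xclbin_path,
        "ivas-library-repo": "/usr/lib/",
        "element-mode": "inplace",
        "kernels": [{
            "library-name": "libivas_xdpuinfer.so",
            "config": {
                "model-name": model_name,
                "model-class": model_class,
                "model-format": "BGR",
                "model-path": model_path,
                "run_time_model": False,
                "need_preprocess": False,
                "performance_test": False,
                "debug_level": 1,
            },
        }],
    }


config = make_dpu_config(
    model_name="densebox_320_320",
    model_class="FACEDETECT",
    model_path="MODEL_PATH",    # placeholder: your model directory
    xclbin_path="XCLBIN_PATH",  # placeholder, as in the example
)
text = json.dumps(config, indent=2)
json.loads(text)  # raises ValueError if the text is not valid JSON
print(text)
```

Writing `text` to `kernel_densebox_320_320.json` gives a file that is guaranteed to parse, although whether the plugin accepts the values is still up to the VVAS documentation.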

Metadata Affixer: ivas_xmetaaffixer

In this example, we display the video stream with an overlay. The scaled frames of video that are processed by the ML block might not be the best for display. To accommodate this, we fork the output of the VCU decoder block into two streams, one for the ML block and the other for the display. The ivas_xmetaaffixer plugin takes the detection coordinates produced by the ML model on the scaled frames and maps them onto the original video stream.

The ivas_xmetaaffixer plugin runs entirely in software on the system processor.

Future versions of Vitis Video Analytics SDK will embed this functionality into ML and bounding box plugins.
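The coordinate mapping can be sketched in a few lines. The code below is an illustration of the idea rather than the plugin's implementation: it assumes the inference frame is a plain stretch of the original (no letterboxing or cropping), and the function name `map_box_to_original` and the 1920x1080 display size are hypothetical.

```python
def map_box_to_original(box, infer_size, original_size):
    """Scale an (x, y, width, height) box from the inference frame
    to the original frame, assuming a plain stretch between the two."""
    x, y, w, h = box
    infer_w, infer_h = infer_size
    orig_w, orig_h = original_size
    sx = orig_w / infer_w  # horizontal scale factor
    sy = orig_h / infer_h  # vertical scale factor
    return (round(x * sx), round(y * sy), round(w * sx), round(h * sy))


# A face found at (80, 40) with size 32x48 in the 320x320 inference frame.
face = (80, 40, 32, 48)
mapped = map_box_to_original(face, infer_size=(320, 320),
                             original_size=(1920, 1080))
print(mapped)  # (480, 135, 192, 162)
```

Because the horizontal and vertical scale factors differ here (6.0 and 3.375), a square detection in the inference frame becomes a wider rectangle in the original frame.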

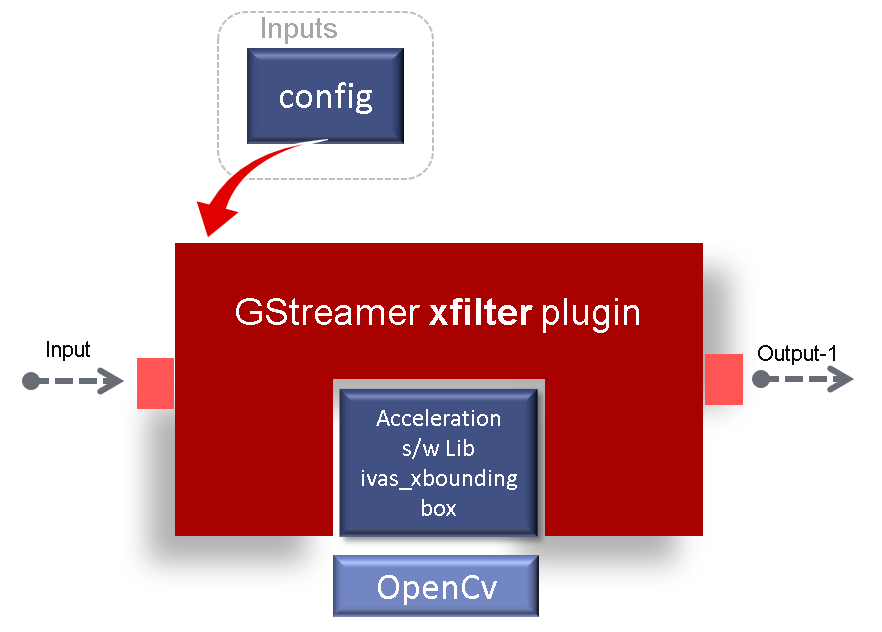

Bounding Box: ivas_xfilter

The ivas_xfilter plugin, used in conjunction with the ivas_xboundingbox software library, draws bounding boxes on the output of the ML block.

Figure 9: Bounding box functionality is used with ivas_xfilter and OpenCV calls

Bounding box functionality is provided by the ivas_xboundingbox SW library which, in turn, makes OpenCV calls behind the scenes. Like the ivas_xfilter instance that does machine learning, this ivas_xfilter instance also uses a JSON file for configuration.

    {
      "xclbin-location": "XCLBIN_PATH",
      "ivas-library-repo": "/usr/lib/",
      "element-mode": "inplace",
      "kernels": [
        {
          "library-name": "libivas_xboundingbox.so",
          "config": {
            "model-name": "densebox_320_320",
            "display_output": 2,
            "font_size": 4,
            "font": 1,
            "thickness": 4,
            "y_offset": 0,
            "debug_level": 1,
            "label_color": { "blue": 0, "green": 0, "red": 255 },
            "label_filter": [ "class" ],
            "classes": [ ]
          }
        }
      ]
    }

A future version of VVAS will have a dedicated bounding box plugin.
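For readers who want to see what drawing a bounding box amounts to, the pure-Python sketch below outlines a rectangle on a tiny frame. It is a stand-in for the kind of drawing that OpenCV's `cv2.rectangle` does, not the ivas_xboundingbox code. The function name `draw_box` is hypothetical, the border is drawn inward from the box edge as a simplification, and the color is taken from a `label_color` dictionary shaped like the one in the configuration above.

```python
def draw_box(frame, x, y, w, h, color, thickness=1):
    """Draw a rectangle outline in place.

    frame is a list of rows, each row a list of (b, g, r) tuples.
    The border is drawn inward from the box edge, a simplification
    made for this sketch.
    """
    height, width = len(frame), len(frame[0])
    for row in range(max(y, 0), min(y + h, height)):
        for col in range(max(x, 0), min(x + w, width)):
            on_edge = (
                row - y < thickness or (y + h - 1) - row < thickness or
                col - x < thickness or (x + w - 1) - col < thickness
            )
            if on_edge:
                frame[row][col] = color


label_color = {"blue": 0, "green": 0, "red": 255}
bgr = (label_color["blue"], label_color["green"], label_color["red"])

frame = [[(0, 0, 0)] * 8 for _ in range(8)]  # 8x8 black frame
draw_box(frame, x=2, y=2, w=4, h=4, color=bgr, thickness=1)
print(frame[2][2], frame[3][3])  # border pixel, interior pixel
```

The border pixels take the red label color while the interior and the rest of the frame stay untouched.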

Complete Pipeline

To run the pipeline shown in Figure 4 in Vitis Video Analytics SDK on a Xilinx board (assuming VVAS has been installed), we’d execute the following in a console window:

    gst-launch-1.0 -v \
      filesrc location=face_detect.mp4 \
      ! qtdemux ! h264parse ! omxh264dec internal-entropy-buffers=3 \
      ! tee name=t0 \
      t0.src_0 \
      ! ivas_xabrscaler xclbin-location=dpu.xclbin kernel-name=v_multi_scaler:v_multi_scaler_1 \
        alpha_r=128 alpha_g=128 alpha_b=128 \
      ! ivas_xfilter kernels-config="kernel_densebox_320_320.json" \
      ! scalem0.sink_master ivas_xmetaaffixer name=scalem0 scalem0.src_master ! fakesink \
      t0.src_1 \
      ! scalem0.sink_slave_0 scalem0.src_slave_0 \
      ! ivas_xfilter kernels-config="boundingbox.json" \
      ! kmssink plane-id=34 bus-id="a0130000.v_mix"

As you can see, this is just GStreamer syntax, which lets you string plugins together. A comparable pipeline in DeepStream would be constructed the same way. So if you’re using NVIDIA DeepStream and you need a solution with additional functionality, perhaps lower latency or power, or some additional logic that runs too slowly on the processor, then a Xilinx solution may be the way to go. And given that the functionality and use model of Vitis Video Analytics SDK are similar to those of DeepStream, migration should be a straightforward exercise for a SW developer.
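For scripted runs, the same `gst-launch-1.0` invocation can be assembled in Python instead of typed into a console. This is a sketch under assumptions: the pipeline text is copied from the command above, the helper name `launch` is hypothetical, and it only makes sense on a board where VVAS and the referenced files are installed.

```python
import shlex
import shutil
import subprocess

PIPELINE = """
filesrc location=face_detect.mp4
! qtdemux ! h264parse ! omxh264dec internal-entropy-buffers=3
! tee name=t0
t0.src_0
! ivas_xabrscaler xclbin-location=dpu.xclbin kernel-name=v_multi_scaler:v_multi_scaler_1
  alpha_r=128 alpha_g=128 alpha_b=128
! ivas_xfilter kernels-config="kernel_densebox_320_320.json"
! scalem0.sink_master ivas_xmetaaffixer name=scalem0 scalem0.src_master ! fakesink
t0.src_1
! scalem0.sink_slave_0 scalem0.src_slave_0
! ivas_xfilter kernels-config="boundingbox.json"
! kmssink plane-id=34 bus-id="a0130000.v_mix"
"""

# shlex.split tokenizes like a POSIX shell: newlines act as whitespace,
# so no backslashes are needed, and quoted values lose their quotes.
argv = ["gst-launch-1.0", "-v"] + shlex.split(PIPELINE)


def launch(args):
    """Run the pipeline if gst-launch-1.0 is on PATH (hypothetical helper)."""
    if shutil.which(args[0]) is None:
        raise FileNotFoundError(args[0] + " not found on PATH")
    return subprocess.run(args, check=True)


print(len(argv), "arguments; first element after options:", argv[2])
```

Calling `launch(argv)` on the target board starts the pipeline. Keeping the pipeline as a list of arguments avoids shell quoting problems when parts of it, such as the input file name, come from variables.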

Next Steps:

To try out Vitis Video Analytics SDK for yourself, navigate to the product page, which walks you through all the steps necessary to evaluate it.

About Craig Abramson

Craig Abramson has been with AMD close to 25 years, most of them as a Field Applications Engineer. Now in marketing, he is passionate about gaining and sharing knowledge about machine learning and its applications at the edge. Outside of AMD he writes and plays music and, like so many others during the pandemic, started a music podcast that has so far been enjoyed by thousands of listeners around the globe.